Client

Krune.io*

Team

1 PM, 8 Engineers, 1 Security Lead, 1 Data Scientist

Role

Lead Product Systems Designer

Scope

Centralize shadow AI usage into a secure, multi-tenant platform

TL;DR

Krune.io evolved from a fragmented “chatbot experiment” into governed AI infrastructure built for enterprise scale. The core problem wasn’t UI; it was risk, control, and operational consistency across teams.

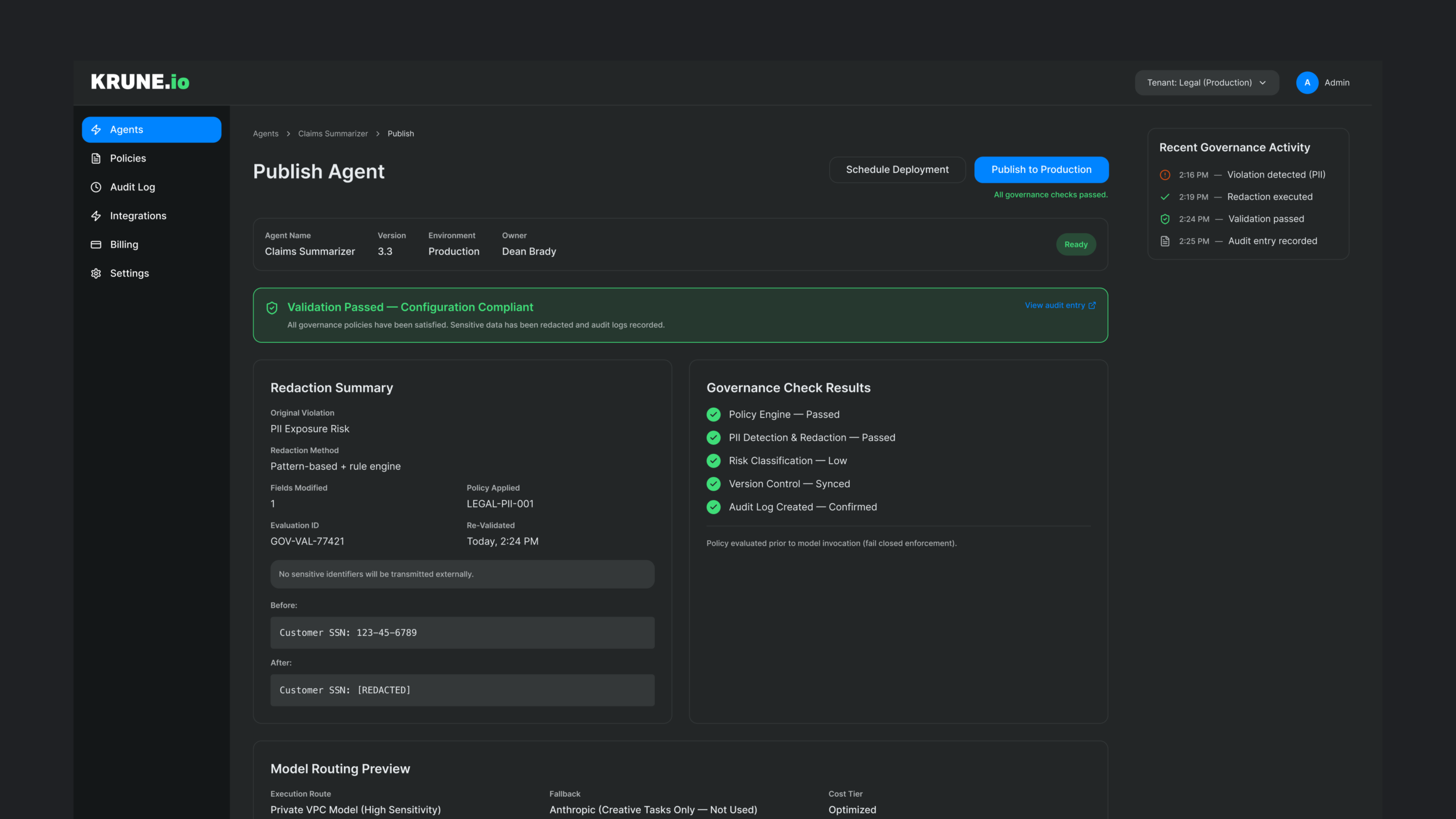

- 96% PII redaction coverage across governed AI interactions (measured via automated redaction tests + audit logs)

- 38% reduction in redundant API costs (measured via consolidated billing + duplicate tool deprecation)

What I owned end-to-end

- Policy-first system architecture (governance before model execution)

- Admin configuration model (policies, tenants, approvals, versioning)

- Trust and verification UX patterns (source citation, response confidence signals)

- Design-to-engineering schema mapping to reduce implementation ambiguity

Problem Space and Constraints

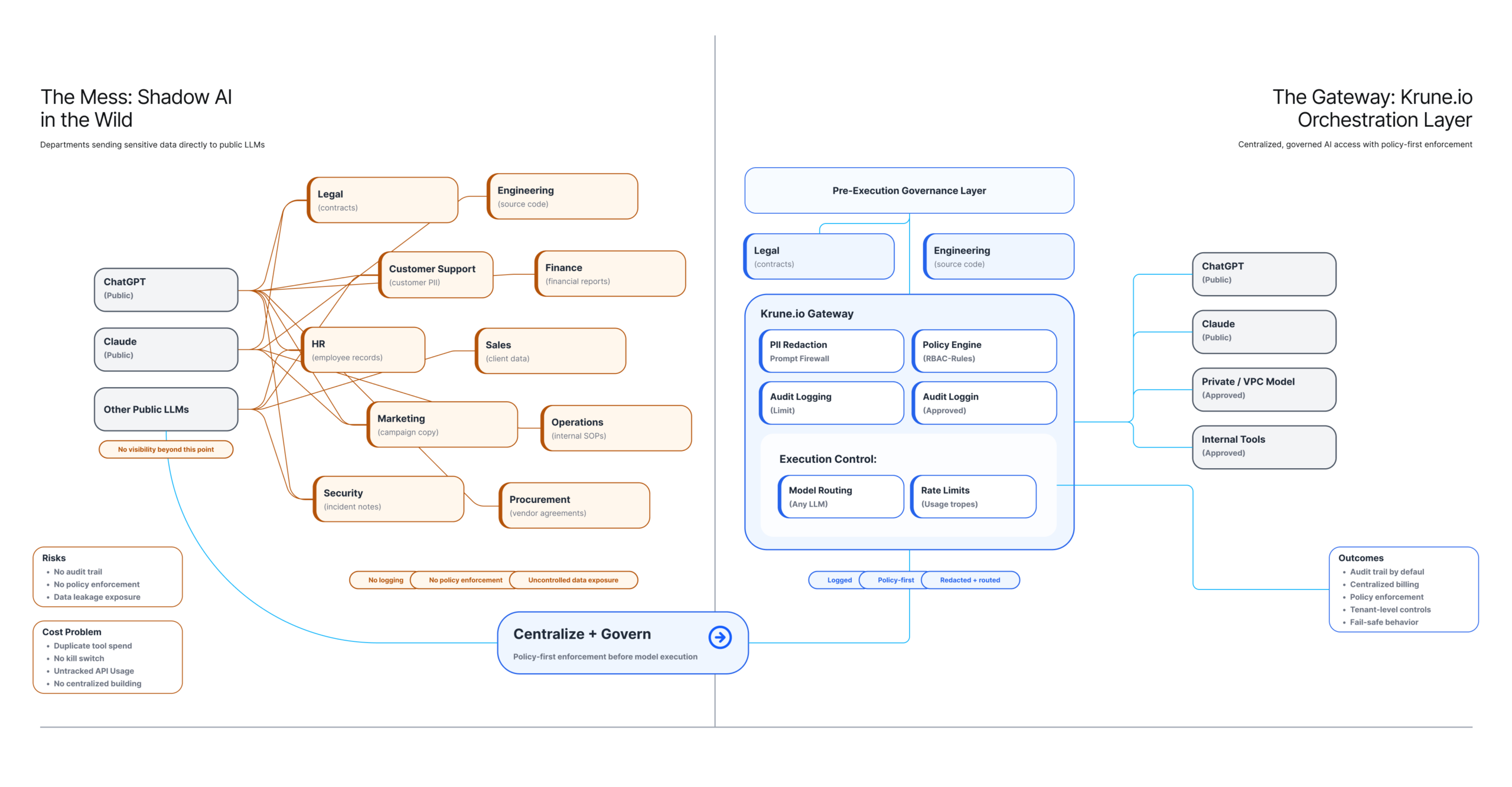

Employees were using public LLM tools with sensitive internal data, proprietary code, legal drafts, and customer information without enforceable controls.

Core risks:

- No auditability of prompts, responses, or outbound data

- No centralized policy enforcement or emergency kill switch

- Fragmented billing and uncontrolled API consumption

- Inconsistent governance across departments

Product complexity

- Department variance: strict compliance workflows vs exploratory creative workflows

- Model churn: the business wanted the ability to switch providers without re-training users

- Trust gap: users needed to understand when outputs were grounded in internal sources vs generative

Technical and compliance constraints

- Integrations: SharePoint, Jira, Confluence, internal services

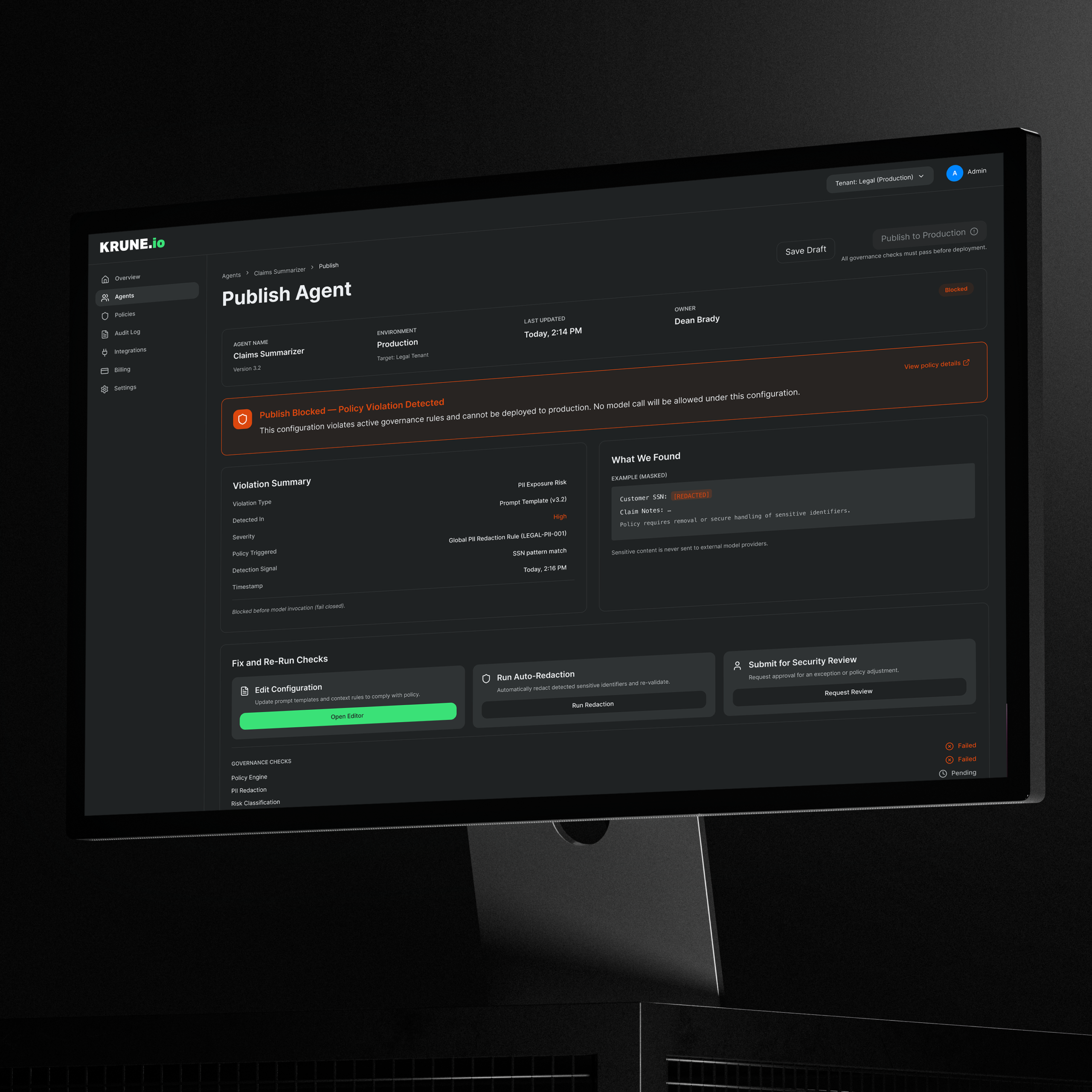

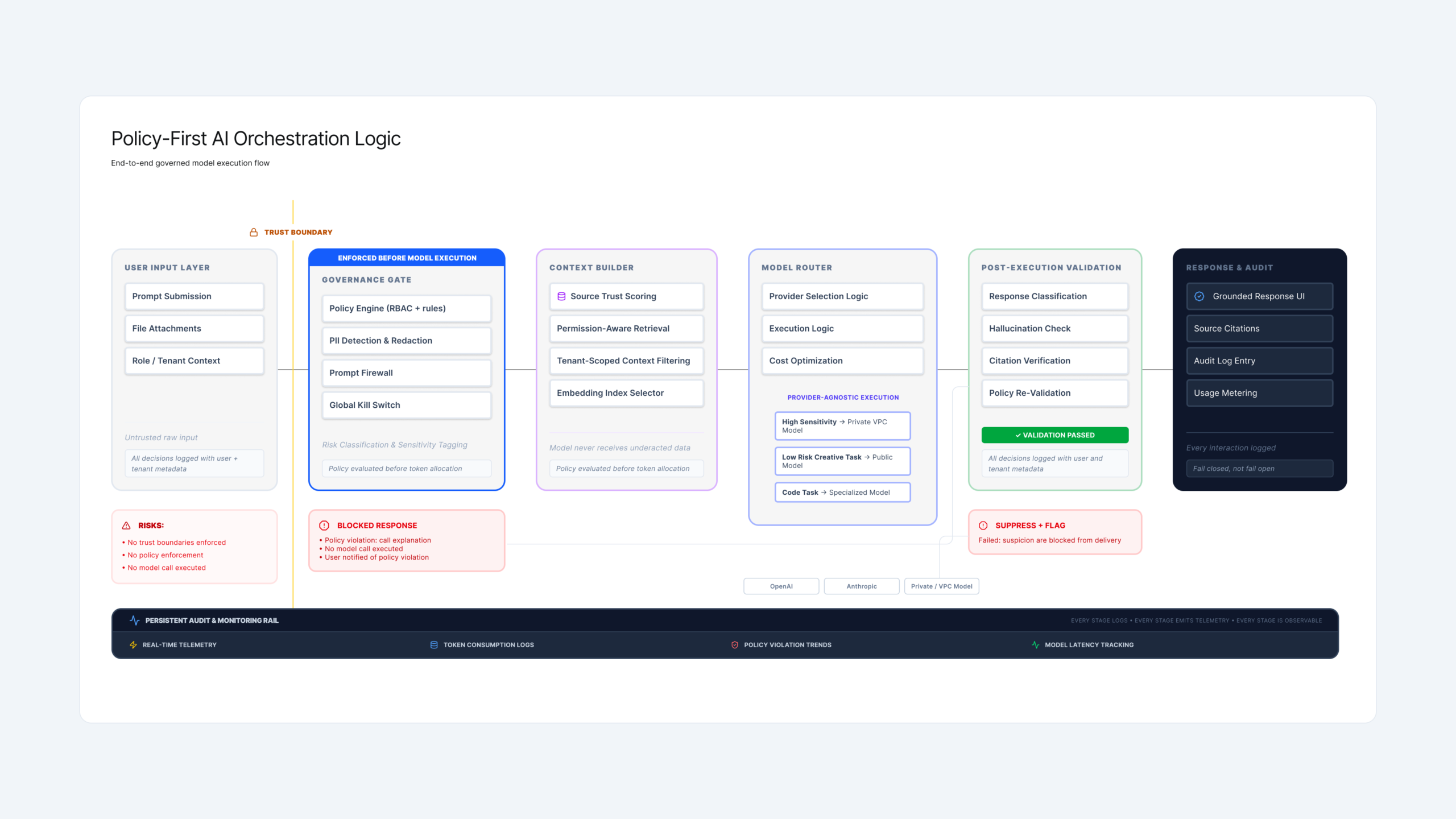

- Policy enforcement: required to happen before prompts reached external models

- Standards alignment: ISO/IEC 42001 considerations and internal security review requirements

Discovery and Strategy

The early instinct was to redesign the chat UI. Discovery revealed that the real issue was not interface quality but orchestration, governance, and systemic risk.

Key insight:

A strong chat experience is irrelevant if the system cannot enforce policy, prove provenance, or contain risk. Governance had to be treated as architecture, not as a downstream feature layered onto a chatbot.

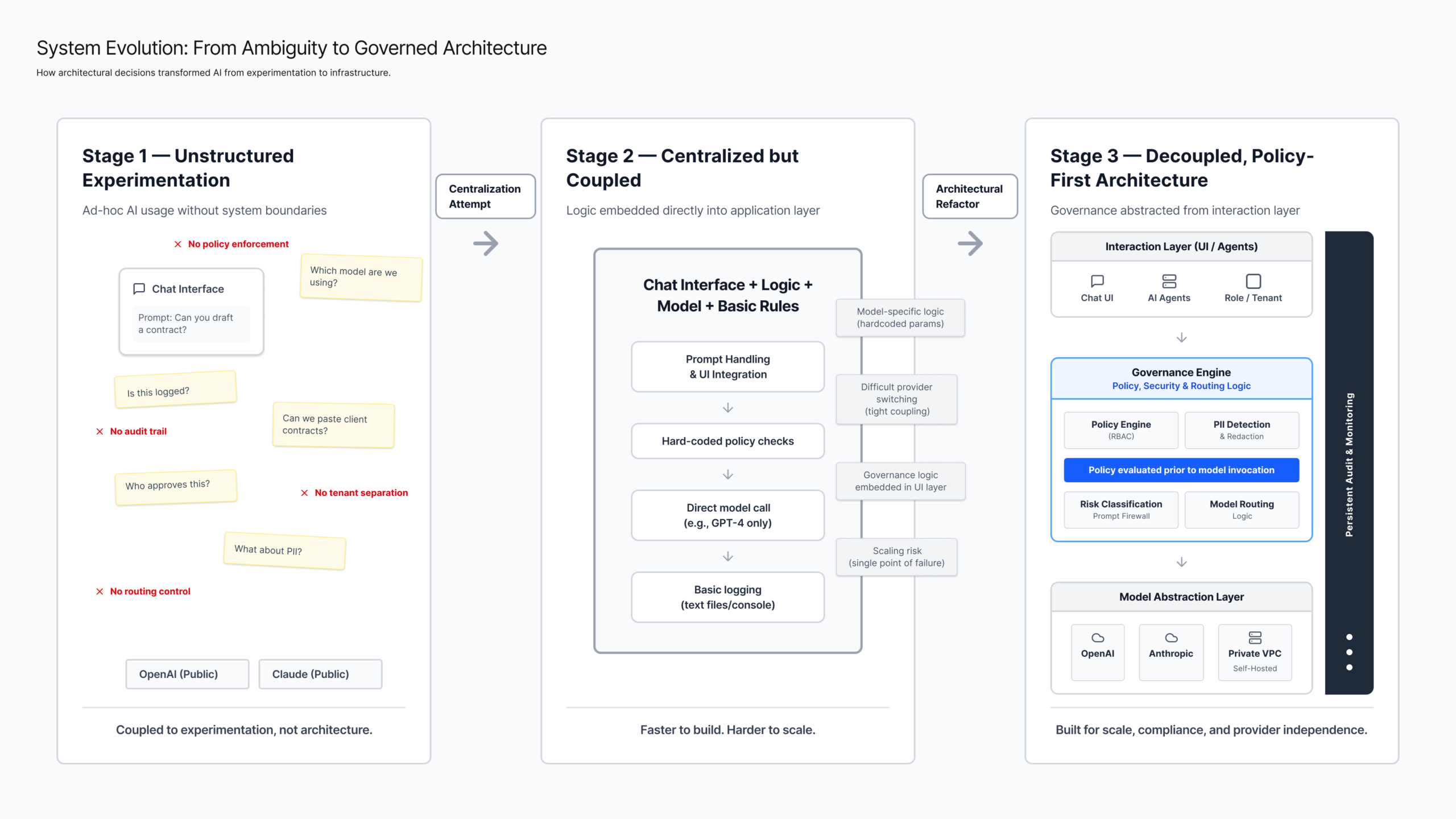

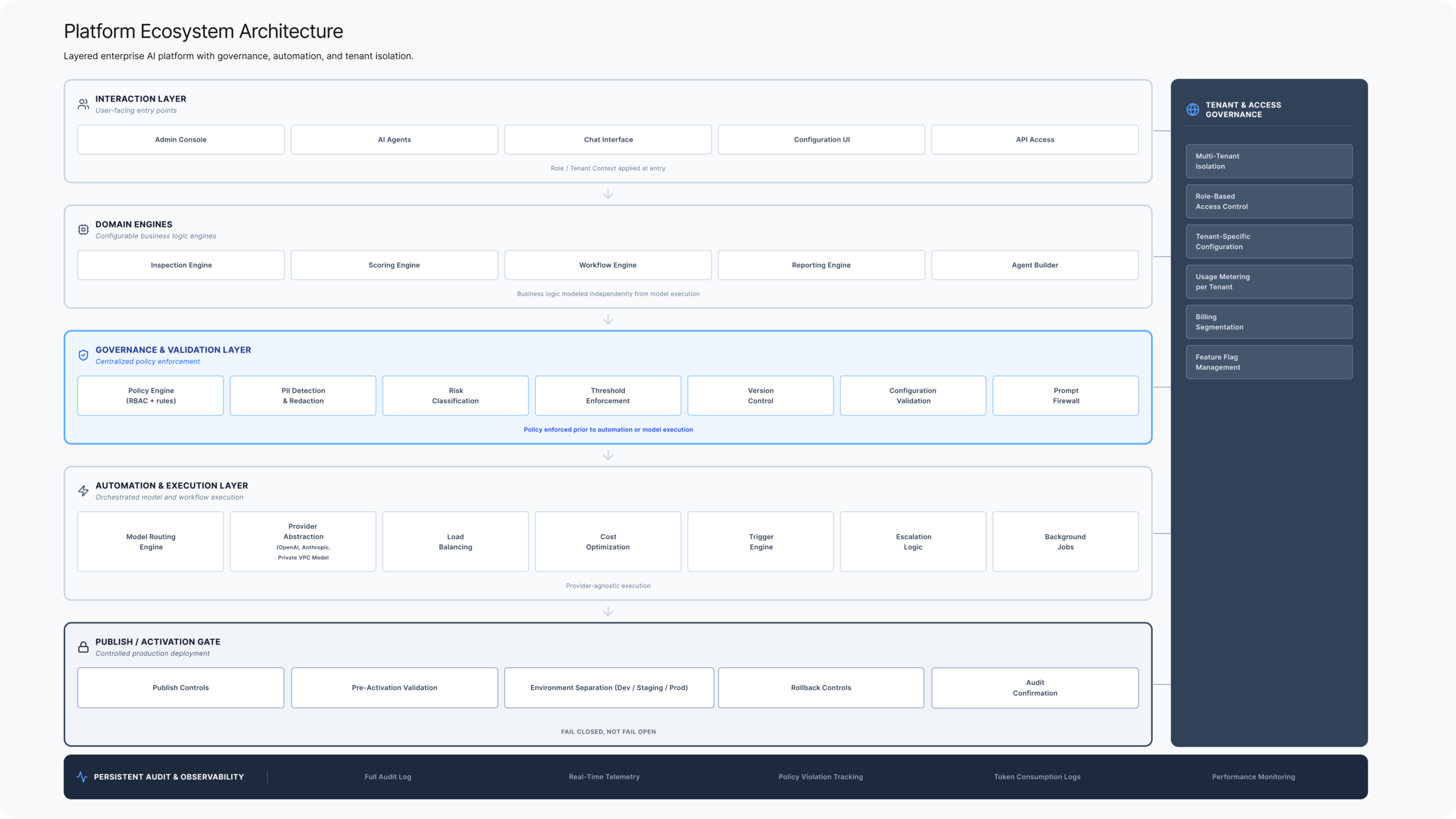

We decoupled the architecture into three layers:

- Interaction Layer: where users ask and consume

- Logic Layer: orchestration, retrieval, model selection, and routing

- Governance Layer: redaction, approvals, audit logging, versioning, and kill switch

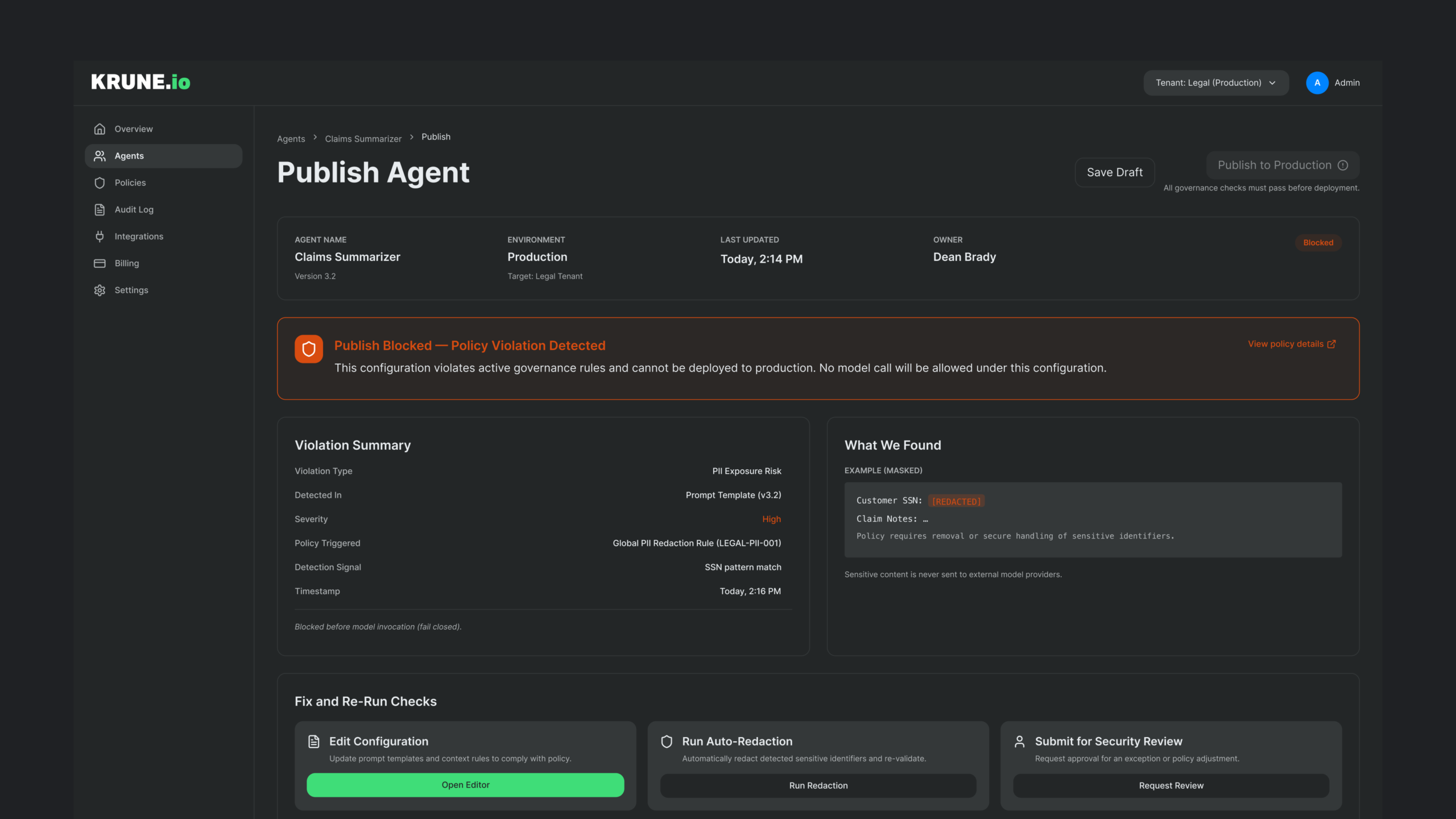

Strategic decision: Policy-first architecture

Instead of configuring agents individually, I proposed a global governance rail that evaluates prompts, context, and permissions before any model invocation occurs. Policy is enforced prior to token allocation, not retroactively after output generation.

North Star:

Integrity over convenience. If the system cannot verify sources or a prompt violates policy, it fails safely, logs the event, and explains why.
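A minimal sketch of that pre-execution gate, assuming policies can be expressed as simple rule functions. Every name here (`PolicyGate`, `no_customer_ids`, `call_model`, the `CUST-` identifier format) is hypothetical and chosen for illustration; it is not Krune.io's actual implementation.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class GateDecision:
    allowed: bool
    reason: str  # user-facing explanation, shown when a prompt is blocked


@dataclass
class PolicyGate:
    # Each rule returns None when the prompt passes, or a reason string when it fails.
    rules: List[Callable[[str], Optional[str]]]
    audit_log: List[dict] = field(default_factory=list)

    def evaluate(self, user: str, prompt: str) -> GateDecision:
        for rule in self.rules:
            try:
                violation = rule(prompt)
            except Exception as exc:
                # Fail safely: a rule that cannot run is treated as a block.
                violation = f"policy check could not run: {exc}"
            if violation is not None:
                self.audit_log.append({"user": user, "allowed": False, "reason": violation})
                return GateDecision(False, violation)
        self.audit_log.append({"user": user, "allowed": True, "reason": "ok"})
        return GateDecision(True, "ok")


def no_customer_ids(prompt: str) -> Optional[str]:
    # Hypothetical rule: block prompts containing a made-up "CUST-" identifier format.
    return "prompt contains a customer identifier" if "CUST-" in prompt else None


def call_model(prompt: str) -> str:
    # Stand-in for a real provider call; never reached when the gate blocks.
    return f"model response to: {prompt}"


def governed_completion(gate: PolicyGate, user: str, prompt: str) -> str:
    decision = gate.evaluate(user, prompt)
    if not decision.allowed:
        return f"Blocked: {decision.reason}"
    return call_model(prompt)
```

The point of the sketch is ordering: the model call sits behind the gate, every decision is logged whether or not the prompt passes, and a blocked prompt returns its reason to the user.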

Execution Reality

This is where architectural decisions translated into enforceable system constraints.

Trade-off: intentional friction

We added controlled friction to prevent long-term liability:

- New agent creation required a Security Data Map approval step.

- The extra step kept ungoverned agents from being deployed.

Governance checkpoints that prevented drift

I designed a prompt and policy versioning workflow:

- Version history with “last known good” rollback

- Change diffs for policy and prompt edits

- A clear release state: draft → review → approved → active
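The versioning workflow above can be sketched as a small state machine. This is an illustrative model only: the class names, the use of `difflib` for diffs, and the choice to demote a replaced version back to "approved" are assumptions, not details from the project.

```python
import difflib
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# Allowed forward transitions, following draft -> review -> approved -> active.
TRANSITIONS: Dict[str, str] = {"draft": "review", "review": "approved", "approved": "active"}


@dataclass
class PolicyVersion:
    number: int
    text: str
    state: str = "draft"


@dataclass
class PolicyHistory:
    versions: List[PolicyVersion] = field(default_factory=list)
    active: Optional[PolicyVersion] = None
    last_known_good: Optional[PolicyVersion] = None

    def new_draft(self, text: str) -> PolicyVersion:
        version = PolicyVersion(number=len(self.versions) + 1, text=text)
        self.versions.append(version)
        return version

    def advance(self, version: PolicyVersion) -> None:
        if version.state not in TRANSITIONS:
            raise ValueError(f"cannot advance from state {version.state!r}")
        version.state = TRANSITIONS[version.state]
        if version.state == "active":
            # The version being replaced becomes the rollback target.
            if self.active is not None:
                self.active.state = "approved"  # assumption: demoted, not deleted
                self.last_known_good = self.active
            self.active = version

    def rollback(self) -> PolicyVersion:
        if self.active is None or self.last_known_good is None:
            raise ValueError("no last known good version to roll back to")
        self.active.state = "approved"
        self.last_known_good.state = "active"
        self.active, self.last_known_good = self.last_known_good, None
        return self.active


def diff(old: PolicyVersion, new: PolicyVersion) -> List[str]:
    # Line-level change diff between two versions of a policy or prompt.
    return list(difflib.unified_diff(
        old.text.splitlines(), new.text.splitlines(),
        fromfile=f"v{old.number}", tofile=f"v{new.number}", lineterm="",
    ))
```

Keeping the transitions in one table means a version cannot skip review, and rollback is a pointer swap rather than a re-edit of the policy text.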

Collaboration model that reduced engineering ambiguity

To reduce back-and-forth, I created a system schema in Figma mapping:

- UI configuration components → API parameters

- Tenant-level overrides → policy evaluation behavior

- Validation states → user-facing error and resolution paths

The Solution

Early product direction focused on feature velocity without formal architectural guardrails. Correcting that meant treating governance as a first-class architectural layer, not a feature.

My role was to:

- Translate strategic product direction into structured system models

- Identify coupling risks early

- Prevent governance logic from being embedded directly into feature logic

- Guide engineering through implementation trade-offs

Through iteration, the system evolved from:

Unstructured feature ideas

→ Coupled logic layers

→ Governed, decoupled architecture

This reduced implementation drift and improved clarity during sprint execution.

Without separating validation from automation, we would have embedded governance directly into scoring logic, creating long-term brittleness. By decoupling these layers early, we reduced engineering rework, prevented governance drift, and preserved long-term system extensibility.

The Solution Ecosystem

Krune.io operated as a governed orchestration layer across tenants, data systems, and model execution.

Multi-tenant governance

- Super-admin view across organizations

- Tenant-level overrides while maintaining global guardrails

- Policy inheritance model (global → tenant → agent)
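One way to picture the inheritance model is a layered merge in which some global settings are locked as guardrails. The sketch below assumes policies are flat key-value settings and that guardrails are a set of locked keys; the function name, keys, and values are hypothetical.

```python
from typing import Any, Dict, FrozenSet, List, Tuple


def resolve_policy(
    global_policy: Dict[str, Any],
    locked_keys: FrozenSet[str],
    tenant_overrides: Dict[str, Any],
    agent_overrides: Dict[str, Any],
) -> Tuple[Dict[str, Any], List[str]]:
    """Merge global -> tenant -> agent settings, skipping overrides of locked keys.

    Returns the effective policy plus the list of rejected override keys,
    so that rejected attempts can be written to an audit log.
    """
    effective = dict(global_policy)  # copy so the global policy is never mutated
    rejected: List[str] = []
    for level, overrides in (("tenant", tenant_overrides), ("agent", agent_overrides)):
        for key, value in overrides.items():
            if key in locked_keys:
                # Global guardrail: lower levels cannot change this setting.
                rejected.append(f"{level}:{key}")
                continue
            effective[key] = value
    return effective, rejected


# Example with made-up settings: the tenant tries to disable redaction and is refused.
effective, rejected = resolve_policy(
    global_policy={"pii_redaction": True, "max_tokens": 1024, "sources": ["confluence"]},
    locked_keys=frozenset({"pii_redaction"}),
    tenant_overrides={"pii_redaction": False, "max_tokens": 2048},
    agent_overrides={"sources": ["jira"]},
)
```

In this example the tenant's larger token limit and the agent's source list take effect, while the attempt to turn off redaction is dropped and recorded.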

Integration stack

- Retrieval from internal systems without persisting PII in external providers

- Centralized logging and billing across teams and tools

Impact and Reflection

The final ecosystem delivered the following outcomes:

Operational impact

- Reduced time-to-launch for internal AI tools from 4 weeks to 48 hours (measured by request-to-ship cycle time)

- Reduced manual security review overhead by shifting checks into the platform (policy-first enforcement)

- Consolidated vendor usage and reduced duplicated tooling

Architectural impact

- Model provider switching without UI or workflow changes, executed through a single configuration update with automated re-validation

- Prevented policy-breaking prompts from leaving the system via pre-execution gating

- Standardized “trust and verification” patterns across future AI experiences
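As a rough illustration of switching providers through configuration, the sketch below routes all calls through one interface and re-validates a candidate provider before it goes live. The provider names, the `ModelRouter` class, and the non-empty-response check are placeholders; the real validation suite is not described in this case study.

```python
from typing import Callable, Dict, List

Provider = Callable[[str], str]


def provider_a(prompt: str) -> str:
    # Stand-in for a real vendor SDK call.
    return f"[provider-a] {prompt}"


def provider_b(prompt: str) -> str:
    return f"[provider-b] {prompt}"


PROVIDERS: Dict[str, Provider] = {"provider-a": provider_a, "provider-b": provider_b}


class ModelRouter:
    def __init__(self, config: Dict[str, str], validation_prompts: List[str]) -> None:
        self._validation_prompts = validation_prompts
        self._provider = self._load(config)

    def _load(self, config: Dict[str, str]) -> Provider:
        name = config["provider"]
        if name not in PROVIDERS:
            raise ValueError(f"unknown provider {name!r}")
        candidate = PROVIDERS[name]
        # Re-validation (placeholder check): each prompt must yield a non-empty answer.
        for prompt in self._validation_prompts:
            if not candidate(prompt).strip():
                raise ValueError(f"provider {name!r} failed validation on {prompt!r}")
        return candidate

    def update_config(self, config: Dict[str, str]) -> None:
        # If validation raises, the assignment never happens and the
        # current provider stays in place.
        self._provider = self._load(config)

    def complete(self, prompt: str) -> str:
        # Callers, including the UI, only ever see this method.
        return self._provider(prompt)
```

Because callers depend only on `complete`, the interaction layer does not change when the provider does, which is the property the first bullet describes.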

Leadership takeaways

- Governance is product. When treated as an add-on, it fails.

- The most important UX is often the failure state and the audit trail.

- Decoupling interaction from logic is what makes model-agnostic design real.